WR 74: AI almost convinced everyone Uber Eats is extra evil

Weekly Reckoning for the week of 12/1/26

Welcome to the Tech Reckoner! New year, new name, new logo. But mostly the same format, don’t worry. (If you missed the announcement, check out last week’s post.) In this Weekly Reckoning, we cover the AI-assisted hoax that convinced the Internet that food delivery companies are (even more) evil, Utah’s interesting take on tech policy, and Iran’s ongoing Internet blackout. Then, my thoughts on AI governance fellowships and a guide for people applying to them.

This edition of the WR is brought to you by… the iPad Chrome/Apple Notes duo (not recommended for Substacking)


The Reckonnaisance

AI-aided food delivery company hoax fools the Internet

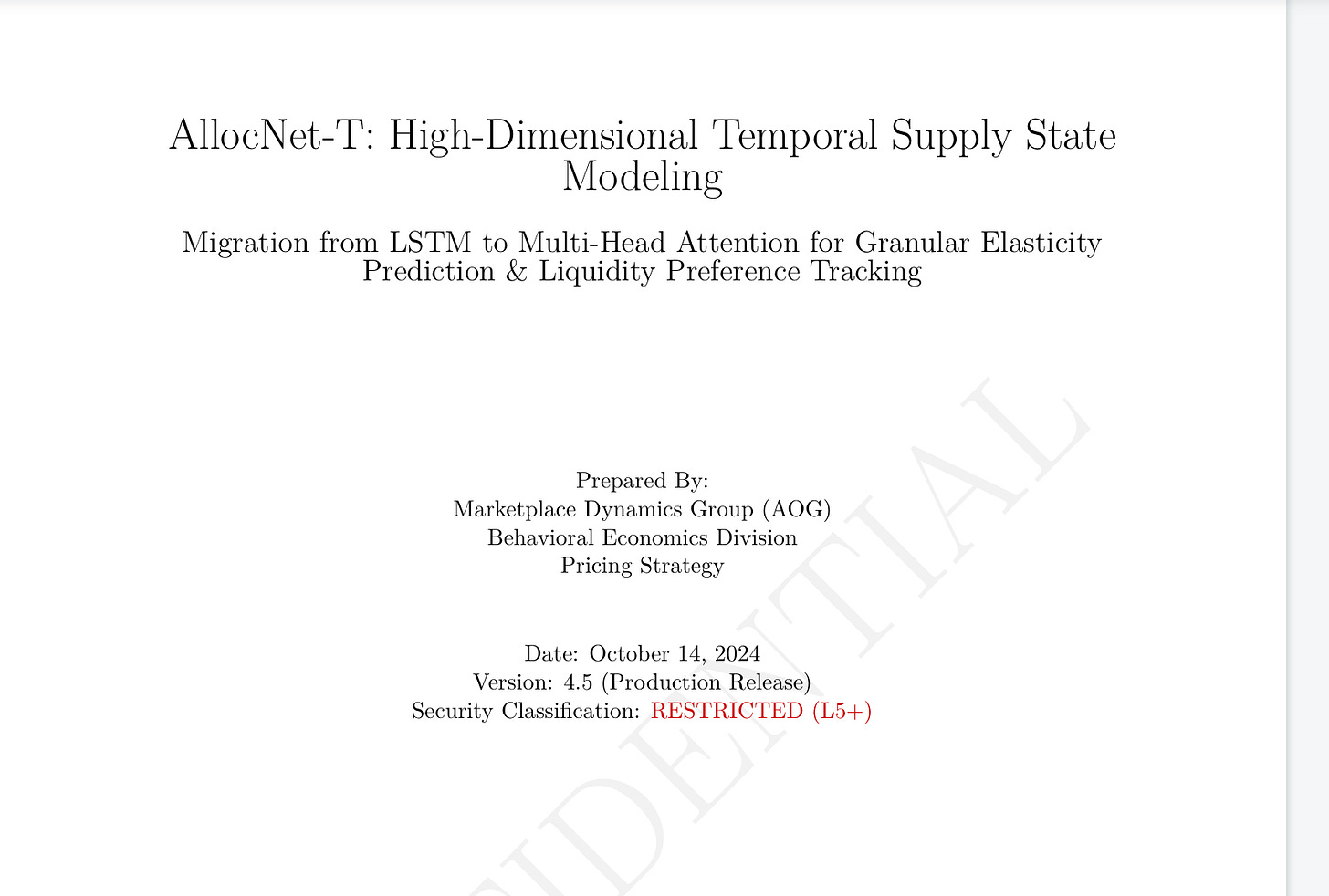

Nutshell: A viral Reddit post detailing various ways a major food delivery company abused workers was revealed to be a hoax propped up by AI-generated documents.

More: The Reddit post garnered tens of thousands of upvotes, including one from yours truly. It alleged that the unnamed company used “driver benefit fees” to fund lobbying, calculated a “desperation score” that it used to lower payments to drivers, and engaged in other nasty practices (using your predicted tip to lower base pay, delaying non-priority orders instead of speeding up priority ones, etc.). Everything sounded plausible enough to get massive attention (enough to draw responses from DoorDash and Uber Eats), but when journalists started digging, things got fishy. The whistleblower began sending them a picture of an Uber Eats employee badge and a technical paper from the “Marketplace Dynamics Group” that conveniently confirmed every claim he had made in the post. Casey Newton seems to have cracked it first: he realized that the whistleblower had used Gemini to create the badge (ironically based on an NBC News reporter’s badge that was sent to him to establish trust) and that the whole paper was likely AI-generated. Once more questions started being asked, the whistleblower disappeared.

Why you should care: It’s unclear what this person’s goal was (bring down Uber Eats? Entertain themselves over the holiday?), but as the old adage goes, “a lie makes it halfway around the world before the truth has time to lace up its boots.” You don’t need AI to craft a good old-fashioned fake Reddit post, and the most this one probably did was make some people more skeptical of food delivery in general (which has well-documented abusive practices, just not these ones). But if journalists hadn’t sniffed out the fake documents, Uber Eats would have had a massive PR scandal on its hands, and we would be facing even bigger questions about the future of news and sourcing than we already are. The next time something like this happens, at a greater level of sophistication, we may not be so lucky.

Utah calibrates tech policy approach

Nutshell: Utah is restricting screen time in schools while also piloting AI for renewing prescriptions.

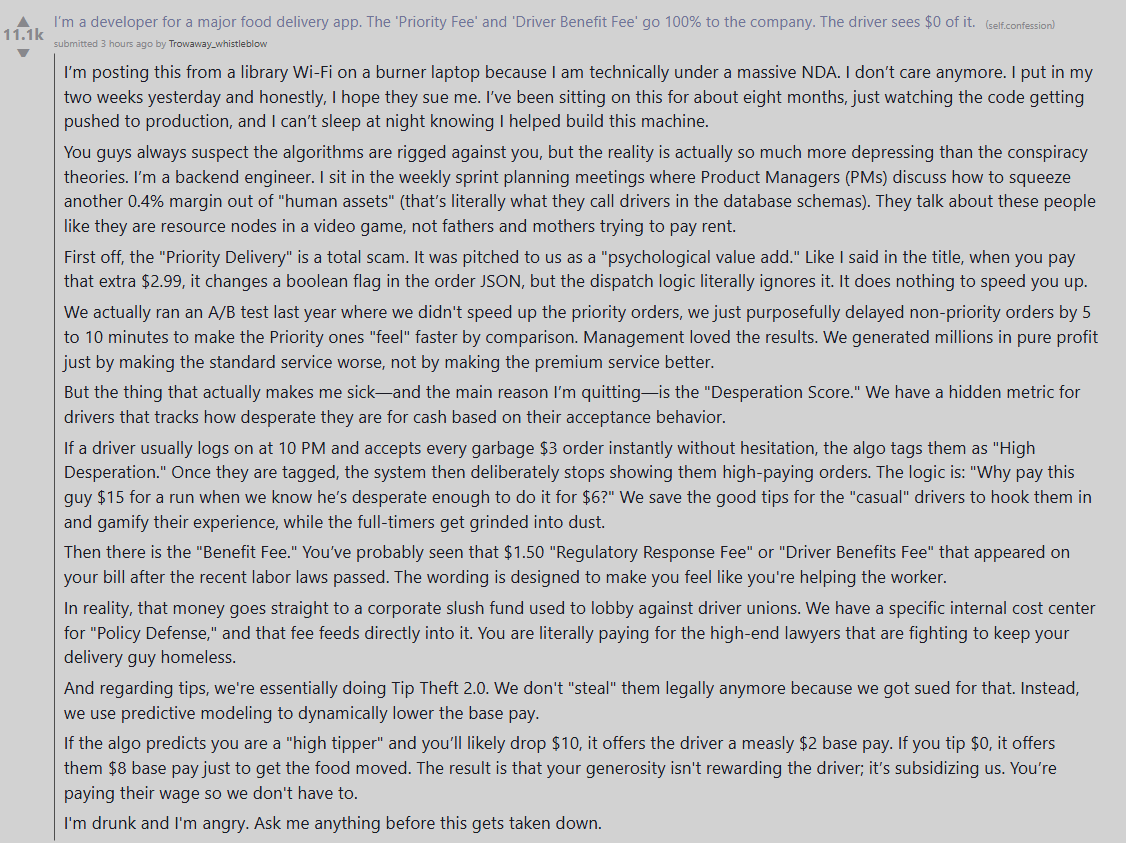

More: In 2025, Utah passed a bill restricting the use of cell phones in schools during class time. It is now proposing to extend the ban to the entire “bell-to-bell” school day. Another bill would set statewide limits on classroom screen time (presumably covering edtech and movies). The limits would be strictest for kindergarten through third grade and would loosen gradually as kids get older. These restrictions have been criticized for not being backed by science, but parents are broadly in favor, and Utah is stepping up to continue the real-time experiment.

Meanwhile, reports of Utah allowing AI to prescribe medications are slightly exaggerated. It’s a pilot program allowing an AI system to handle prescription refills for commonly prescribed drugs. Painkillers, injectables, and ADHD drugs are excluded, presumably to guard against potential abuse. The company claims a 99.2% agreement rate with doctors and says the system escalates to a doctor if there’s any uncertainty. Politico raises two concerns:

Misuse/abuse, including people struggling with addiction gaming the system to obtain drugs

Missing drug interactions and clinical red flags

The first can presumably be handled by limiting the program to relatively innocuous drugs. The second can be handled by a rules-based system: if a patient is on medication X, don’t allow a refill of Y, and if a patient reports symptom A, escalate to a doctor. Incorporating AI into healthcare carries huge risks, but if the safeguards work as advertised, this may be a relatively good way to make patients’ lives easier. But if anyone jailbreaks this, you bet we’ll write about it.
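To make the rules-based idea concrete, here is a minimal Python sketch of what such a refill gate could look like. Everything in it is hypothetical. The drug names (`drug_x`, `drug_y`, `drug_z`), the class labels, the symptom `symptom_a`, and the three outcomes are placeholders. They do not describe the pilot’s actual logic, and they are not clinical guidance.

```python
from dataclasses import dataclass, field

# HYPOTHETICAL rule tables for illustration only; not the Utah pilot's rules.
# Drug classes that never go through the automated path (mirroring the
# exclusion of painkillers, injectables, and ADHD drugs).
EXCLUDED_CLASSES = {"painkiller", "injectable", "adhd"}

# Unordered pairs that must not be combined: if a patient is on one drug,
# a refill of the other is blocked.
INTERACTING_PAIRS = {frozenset({"drug_x", "drug_y"})}

# Reported symptoms that always route the request to a human doctor.
RED_FLAG_SYMPTOMS = {"symptom_a"}


@dataclass
class RefillRequest:
    drug: str
    drug_class: str
    current_meds: set[str] = field(default_factory=set)
    reported_symptoms: set[str] = field(default_factory=set)


def check_refill(req: RefillRequest) -> str:
    """Apply fixed rules in order; return 'escalate', 'block', or 'approve'."""
    # Rule 1: drugs with abuse potential always go to a doctor.
    if req.drug_class in EXCLUDED_CLASSES:
        return "escalate"
    # Rule 2: any red-flag symptom goes to a doctor.
    if req.reported_symptoms & RED_FLAG_SYMPTOMS:
        return "escalate"
    # Rule 3: block a refill that interacts with a current medication.
    for med in req.current_meds:
        if frozenset({med, req.drug}) in INTERACTING_PAIRS:
            return "block"
    # Only requests that pass every rule are eligible for an automatic refill.
    return "approve"


if __name__ == "__main__":
    print(check_refill(RefillRequest("drug_y", "maintenance", current_meds={"drug_x"})))
    print(check_refill(RefillRequest("drug_z", "maintenance", reported_symptoms={"symptom_a"})))
    print(check_refill(RefillRequest("drug_z", "maintenance")))
```

Running it prints `block`, `escalate`, and `approve`. The appeal of this kind of layer is that it is deterministic and auditable. Whatever the AI system decides, a fixed checklist like this one could sit in front of it and catch the known-dangerous cases.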

Why you should care: Utah is embodying what we could call action-oriented conservative tech policy. Child protection is admittedly a bipartisan issue, but most of the states that have enacted bell-to-bell school cell phone bans (the kind Utah is now pursuing) are red states.

Source: ABC News

Conservatives have also been pushing pro-innovation policies, and Utah’s prescription pilot is an application-forward way to promote AI integration. My bet: more states will follow. (Also, if anyone wants to do an analysis of Mormon views on tech policy, please reach out.)

Iran under Internet blackout

Nutshell: Iran was under an “unprecedented” Internet blackout as anti-government protests continued.

More: After a currency collapse in late December, protesters took to the streets. Protests seem to be widespread despite an intense crackdown that has left over 200 people dead (update: the death toll is now reportedly over 3,700) and thousands more in police custody. Their full extent is hard to determine, though, because the government shut down the Internet on January 8, even blocking international calls to the country. Government Telegram accounts have continued to post, however, which indicates a more sophisticated blackout than in the past. Some videos have trickled out (in part because some activists have Starlink), but as things stand, Iran is largely shrouded from the world.

Why you should care: Internet blackouts have two goals: keep information from getting out to the world, and keep people inside from coordinating. This one is proving largely effective at the former, although the absence of information is itself a media story. Its effect on the latter is less clear. The fact that the protests seem to be continuing suggests it may not be very effective there. At a certain point, protesters don’t need to coordinate (when everyone’s out on the street, you don’t need to text them), but whether we’re at that tipping point remains to be seen.

It’s also important to note that the blackout has an enormous human toll, both within Iran and outside it. As my friend Yasaman, who has family in Iran, points out, it’s easy for those of us in the liberal democratic world to assume that everyone is connected. In the same way, it’s easy to forget that over 2 billion people in the world still lack access to safe drinking water. I’ll leave you with her words:

“In our connected world, imagine not being able to even call your loved ones. Imagine having to pretend every day that everything is fine, because the reality you live in, is different from the reality you were born in, and from your homeland. As a researcher, I’m sick of talking about fairness, equality, and AI misuse only theoretically, and with the privilege of living in a democratic reality... spread their voice and call for freedom.”

Extra Reckoning

AI governance fellowships are great kickstarters for AI policy careers. But they’re becoming more and more competitive, with thousands of applications for dozens of slots. That creates a bit of a catch-22: the most competitive applicants already have some AI policy research experience, often through a master’s degree or another fellowship. It can seem hopeless, but it’s not.

It’s important to remember that different fellowships serve different purposes. There are at least two kinds: individual mentorship and cohort-based. Individual mentorship programs involve working closely with a specific mentor on a research project or other output. Cohort-based ones usually involve some kind of training and sometimes research as well. I’ve participated in one of each. The Future Impact Group runs the FIG Fellowship, where you can apply to work with a mentor on a part-time project. Talos is a cohort-based fellowship focused on European AI policy. (These aren’t mutually exclusive categories: the FIG cohort is also amazing, and many Talos fellows go on to placements where they might be doing research.) If you don’t have much research experience yet, a cohort-based fellowship can help you figure out your research interests and potentially serve as a foot in the door for further research or other work in the field.

Now I’m a mentor for the Pivotal fellowship, where you can apply to work with a mentor on an AI governance research project, and I’m excited to be on the other side. Having seen both sides, I know these fellowships can be confusing to navigate, and a lot of them are popping up. I’ve put together a document with information about all the fellowships I know of, plus some advice for applicants. If there are fellowships you’ve heard of that I haven’t listed, please let me know! By the same token, if you have any other advice, jot down a comment.

I Reckon…

that the Sunday roast should be a thing outside the UK.


Update: thanks to the iPad, the fellowship Google doc wasn’t linked correctly—should be fixed now!